Overview

Most companies operate at roughly 15% AI-assisted engineering. Engineers occasionally “use” AI tools – but AI does not shape architecture, velocity, or throughput.

Moving to 75% AI-coding is a paradigm shift, not an upgrade. It requires:

- Changing engineering culture and breaking down barriers

- Re-architecting how code is written, reviewed, and documented

- A codified protocol for how AI interacts with your system

- Multi-agent alignment (claude.md, codex.md, MCPs)

- Multi-round reinforcement loops

- Infrastructure + documentation restructuring

- A CEO-driven cultural transformation

This chapter lays out the exact operational blueprint for AI-native engineering.

Section 1: Laying the right foundation and preparing your organization for a disruptive transformation

As CEO, you need to expect these barriers and plan to overcome them before launching your 15–75% AI-native transformation project.

1.1 Acknowledge and Expect the Knowledge Barrier: AI Does Not Know Your Codebase (Yet)

Most CEOs expect productivity on Day 1 of AI adoption. But AI cannot meaningfully contribute until it understands your:

- Architectural patterns

- Folder/module structure

- Naming conventions

- Domain models

- Class relationships

- Integration points

- Constraints

- Legacy quirks

This is the conditioning phase – and it is non-negotiable. AI must be onboarded like a senior engineer, except the onboarding must be:

- Explicit

- Repetitive

- Structured

- Multi-document

- Multi-agent

Engineer Task: Provide the AI With Context

Your team must supply:

- Architecture diagrams

- Folder/module structures

- Naming + type conventions

- Event flows

- State machines

- Integration contracts
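One way to make this checklist enforceable is to track it as data. The following is a minimal sketch, not a prescribed tool: the `ContextManifest` type, the `missingContext` helper, and the file paths are all hypothetical names chosen for illustration.

```typescript
// Hypothetical checklist of the context artifacts listed above.
const REQUIRED_CONTEXT = [
  "architectureDiagrams",
  "moduleStructure",
  "namingAndTypeConventions",
  "eventFlows",
  "stateMachines",
  "integrationContracts",
] as const;

type ContextKey = (typeof REQUIRED_CONTEXT)[number];

// A manifest may be partially filled in while the team is still writing docs.
// Each value is a list of paths to where that artifact lives.
type ContextManifest = Partial<Record<ContextKey, string[]>>;

// Returns the checklist items that have no documents attached yet.
function missingContext(manifest: ContextManifest): ContextKey[] {
  return REQUIRED_CONTEXT.filter((key) => (manifest[key] ?? []).length === 0);
}

// Example: a team that has only documented architecture and naming so far.
// The paths are placeholders.
const manifest: ContextManifest = {
  architectureDiagrams: ["docs/architecture/overview.md"],
  namingAndTypeConventions: ["docs/conventions.md"],
};

const gaps = missingContext(manifest);
```

Here `gaps` names the four artifacts still missing, which gives the team a concrete to-do list before the AI is asked to write anything.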

The psychological barrier engineers need to overcome to embrace AI agents

1.2 Overcome Engineers' Psychological Barriers

The biggest barrier is not technical – it is psychological.

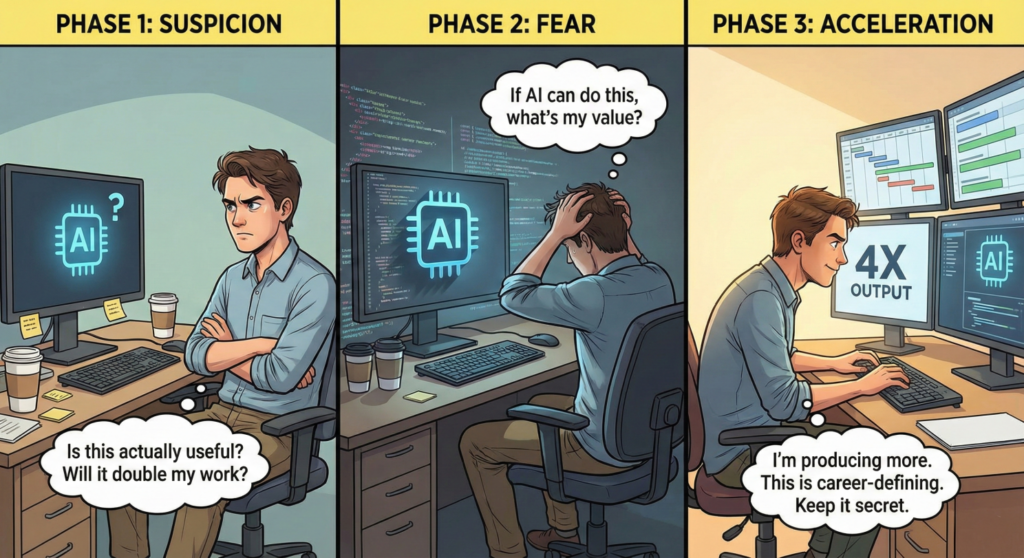

Engineers experience three emotional phases when asked to start coding with AI tools:

- Phase 1 – Suspicion: “Is AI actually useful? Does it know enough? Or is it just going to end up doubling my work?”

- Phase 2 – Fear: “Shoot, if AI can do this, what happens to my value?”

- Phase 3 – Acceleration: “I’m producing 4× more. This is career-defining. I need to keep this to myself to be above the rest.”

The CEO Must Manage This Arc Intentionally

Your culture must explicitly reward:

- Using AI, even if some delivery dates slip at first

- 75%-AI-coded projects

- Time spent collaborating with AI (iterations + refinements)

- Documenting for AI (QA’s new job)

- Correcting AI (senior engineers’ primary responsibility)

- Sharing prompt patterns & learnings across the team (it only works if the whole team moves forward)

- Designing MCP bridges with AI (design’s new mandate)

Over time, AI becomes a trusted exoskeleton, not a threat. The company that gets this mindset right wins first.

Section 2: The Step-by-Step 15–75% Plan

Even if you’re not a product or engineering CEO, you must know enough to call bullshit during this transformation. This section gives you the essential concepts in clear, simple language – but with enough depth to ensure you can spot gaps, challenge assumptions, and lead with confidence.

Onboarding an AI Coding Agent is akin to teaching the basic rules of life to a child prodigy

Onboarding an AI coding agent onto the development team

AI becomes effective only when engineers correct its early attempts and teach it as they would any new developer joining the team. In fact, the AI agent needs to be onboarded like a very young child prodigy with superintelligence – but no experience.

Typical AI Coding Agent Onboarding Process:

- Round 1: AI produces something directionally correct but structurally flawed – Engineer corrects constraints, dependencies, and domain rules.

- Round 2: AI internalizes these new instructions and produces an execution plan that is almost there but may include a few hallucinated data structures, methods, and functions – Engineer adds details to the plan, removes the hallucinations, and adds more context on the external and internal APIs the code uses.

- Round 3: AI builds a plan that sounds good, has all the parameters correct, and seems to understand the project. However, when executed, it produces functions and methods that make sense neither logically nor for the business use case – Engineer painstakingly points out the mistakes, explains the context further, articulates the business use cases, and defines a few test cases the code must pass to actually make sense.

- Round 4: Finally, AI produces code that does exactly what the engineer would do, though perhaps not written exactly in their style – This is where the engineer needs to stop refining. Not only will the code work, it will be better documented and referenced than what humans typically produce. AI can also write the test cases itself, so the code can be unit tested against business logic immediately after coding.

However, if this process is done lazily, left incomplete, or over-corrected, you will incorrectly conclude:

- “Our codebase is too complex for AI.”

- “AI is not ready for real engineering work.”

- “We should only let AI handle small modules.”

All three are false inferences in most cases and indicate a broken initiation process.

2.1 Making Sure AI Plans Before It Codes

AI should never start coding immediately. It must first generate an execution plan.

2.1.1 Example Prompt:

You are implementing a "Creator Referral Module". Before writing code, generate an execution plan that:

- Defines domain objects

- Lists commands and queries

- Outlines schema changes

- Predicts integration points

- Lists test cases

- Identifies edge conditions
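To keep this prompt consistent across engineers, it can be generated rather than retyped. The sketch below is an illustration under stated assumptions: `buildPlanPrompt` and `PLAN_SECTIONS` are hypothetical names, not part of any SDK, and the wording is copied from the example prompt above.

```typescript
// Sections the plan must cover, taken from the example prompt above.
const PLAN_SECTIONS: string[] = [
  "Defines domain objects",
  "Lists commands and queries",
  "Outlines schema changes",
  "Predicts integration points",
  "Lists test cases",
  "Identifies edge conditions",
];

// Builds the "plan before code" prompt for any module name.
// Hypothetical helper for illustration only.
function buildPlanPrompt(moduleName: string, sections: string[] = PLAN_SECTIONS): string {
  const header =
    `You are implementing a "${moduleName}". ` +
    "Before writing code, generate an execution plan that:";
  const bullets = sections.map((section) => `- ${section}`);
  return [header, ...bullets].join("\n");
}

const planPrompt = buildPlanPrompt("Creator Referral Module");
```

Passing a different module name reuses the same six requirements, so no engineer can accidentally drop the test-case or edge-condition step.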

2.1.2 AI-Generated Execution Plan Example

Domain Objects

- Creator

- ReferralLink

- ReferralEvent

- RewardPayout

Commands

- CreateReferralLinkCommand

- ProcessReferralEventCommand

Queries

- GetReferralStatsQuery

- ListReferralEventsQuery

Schema Changes

- TABLE referral_links (...)

- TABLE referral_events (...)

Integration Points

- PaymentsService

- AuthService

Test Cases

- Unique referral code generation

- Event logging

- Payout correctness

A reviewed execution plan can cut rework substantially, by an estimated 60–80%.
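A plan is only useful if someone checks it against what was asked. As a rough sketch, the plan can be modeled as a typed object and screened for empty sections before any code is written. The `ExecutionPlan` interface and `emptySections` helper are hypothetical; the field values are taken from the example plan above.

```typescript
// Hypothetical shape for an execution plan, mirroring the sections
// requested in the example prompt.
interface ExecutionPlan {
  domainObjects: string[];
  commands: string[];
  queries: string[];
  schemaChanges: string[];
  integrationPoints: string[];
  testCases: string[];
  edgeConditions: string[];
}

// Returns the names of sections the AI left empty. An empty result means
// the plan is complete enough for a human to review.
function emptySections(plan: ExecutionPlan): string[] {
  return (Object.keys(plan) as (keyof ExecutionPlan)[]).filter(
    (section) => plan[section].length === 0
  );
}

const referralPlan: ExecutionPlan = {
  domainObjects: ["Creator", "ReferralLink", "ReferralEvent", "RewardPayout"],
  commands: ["CreateReferralLinkCommand", "ProcessReferralEventCommand"],
  queries: ["GetReferralStatsQuery", "ListReferralEventsQuery"],
  schemaChanges: ["referral_links", "referral_events"],
  integrationPoints: ["PaymentsService", "AuthService"],
  testCases: ["Unique referral code generation", "Event logging", "Payout correctness"],
  edgeConditions: [], // the example plan above does not list any
};

const incomplete = emptySections(referralPlan);
```

Run against the example plan, the check flags `edgeConditions`: the prompt asked for edge conditions, but the generated plan never listed them. This is exactly the kind of gap an engineer should send back before coding starts.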

2.2 The AI-Native Engineering Workflow: Syntax + Process

Your organization must adopt consistent prompting protocols.

2.2.1 Developer → AI Syntax

Task: Implement GetReferralStatsQueryHandler

Context: Use QueryBus pattern

Constraints: No schema changes; must paginate

Output: After writing code, auto-generate tests
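The four-field format above is easy to standardize in code. The following sketch assumes a hypothetical `TaskPrompt` type and `renderTaskPrompt` function; neither comes from a real library.

```typescript
// Hypothetical structure for the four-field prompt format shown above.
interface TaskPrompt {
  task: string;
  context: string;
  constraints: string[];
  output: string;
}

// Renders the prompt in a fixed field order so every engineer's
// requests reach the AI in the same shape.
function renderTaskPrompt(p: TaskPrompt): string {
  return [
    `Task: ${p.task}`,
    `Context: ${p.context}`,
    `Constraints: ${p.constraints.join("; ")}`,
    `Output: ${p.output}`,
  ].join("\n");
}

const statsPrompt = renderTaskPrompt({
  task: "Implement GetReferralStatsQueryHandler",
  context: "Use QueryBus pattern",
  constraints: ["No schema changes", "must paginate"],
  output: "After writing code, auto-generate tests",
});
```

Keeping constraints as a list makes it harder to forget one, and the rendered text matches the example prompt line for line.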

2.2.2 AI Output Example

    export class GetReferralStatsQueryHandler {
      constructor(
        private readonly repo: ReferralRepository,
        private readonly eventsRepo: ReferralEventsRepository
      ) {}

      async execute(query: GetReferralStatsQuery) {
        // Aggregate stats for the creator
        const stats = await this.repo.getStats(query.creatorId);
        // Paginated event list, per the "must paginate" constraint
        const events = await this.eventsRepo.list(query.creatorId, query.page, query.pageSize);
        return { stats, events };
      }
    }

This is the baseline. Engineers refine from here.
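Since the prompt asked for auto-generated tests, it helps to see what a first test might check. The sketch below repeats the handler so it is self-contained and exercises the "must paginate" constraint against in-memory fakes. Every interface, return shape, and the 1-based page numbering are assumptions made for illustration; the original does not define them.

```typescript
// Assumed shapes; the original example does not define these types.
interface GetReferralStatsQuery {
  creatorId: string;
  page: number;
  pageSize: number;
}
interface ReferralStats { totalReferrals: number }
interface ReferralEvent { id: string }

interface ReferralRepository {
  getStats(creatorId: string): Promise<ReferralStats>;
}
interface ReferralEventsRepository {
  list(creatorId: string, page: number, pageSize: number): Promise<ReferralEvent[]>;
}

class GetReferralStatsQueryHandler {
  constructor(
    private readonly repo: ReferralRepository,
    private readonly eventsRepo: ReferralEventsRepository
  ) {}

  async execute(query: GetReferralStatsQuery) {
    const stats = await this.repo.getStats(query.creatorId);
    const events = await this.eventsRepo.list(query.creatorId, query.page, query.pageSize);
    return { stats, events };
  }
}

// In-memory fakes: three events, paginated with 1-based page numbers.
const allEvents: ReferralEvent[] = [{ id: "e1" }, { id: "e2" }, { id: "e3" }];
const fakeRepo: ReferralRepository = {
  getStats: async () => ({ totalReferrals: allEvents.length }),
};
const fakeEventsRepo: ReferralEventsRepository = {
  list: async (_creatorId, page, pageSize) =>
    allEvents.slice((page - 1) * pageSize, page * pageSize),
};

// The "must paginate" constraint as a check: page 2 with a page size
// of 2 should contain only the third event.
async function checkPagination(): Promise<string[]> {
  const handler = new GetReferralStatsQueryHandler(fakeRepo, fakeEventsRepo);
  const result = await handler.execute({ creatorId: "c1", page: 2, pageSize: 2 });
  return result.events.map((event) => event.id);
}
```

Injecting the repositories through the constructor is what makes this possible: the handler can be tested without a database, which is also what lets an AI agent run its own tests immediately after generating code.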

2.3 Multi-Agent Configuration Files (claude.md and codex.md)

It's best practice to define two complementary AI personas:

- Claude → architecture, execution plans, coding, system design

- Codex → some coding, refactoring, tests

2.3.1 Example: claude.md – Architectural Agent

# Claude Architecture Guidance

## Responsibilities

- Architecture reviews

- Execution plan generation

- System design refinement

- Risk detection

- Documentation

## Rules

1. Always produce an execution plan before coding

2. Ask for missing context

3. Identify architectural risks

4. Document everything

5. Never assume schema

2.3.2 Example: codex.md – Coding + Testing Agent

# Codex Coding Guidelines

## Responsibilities

- Code generation

- Refactoring

- Unit + integration tests

- Migrations

- Linting

## Rules

1. Follow conventions exactly

2. Generate tests for all new code

3. Create changelog entries

4. Use strict TypeScript

5. Keep domain logic out of controllers
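The division of labor in the two files above can also be expressed as a routing table, so tooling sends each kind of task to the right persona. This is a sketch under assumptions: the `TaskKind` names, `RESPONSIBILITIES`, `agentFor`, and `stepsForFeature` are all hypothetical, and the mapping simply mirrors the Responsibilities sections as written.

```typescript
type Agent = "claude" | "codex";

// Task kinds derived from the Responsibilities lists in the two files.
type TaskKind =
  | "architecture-review"
  | "execution-plan"
  | "system-design"
  | "risk-detection"
  | "documentation"
  | "code-generation"
  | "refactoring"
  | "tests"
  | "migrations"
  | "linting";

const RESPONSIBILITIES: Record<TaskKind, Agent> = {
  "architecture-review": "claude",
  "execution-plan": "claude",
  "system-design": "claude",
  "risk-detection": "claude",
  "documentation": "claude",
  "code-generation": "codex",
  "refactoring": "codex",
  "tests": "codex",
  "migrations": "codex",
  "linting": "codex",
};

function agentFor(kind: TaskKind): Agent {
  return RESPONSIBILITIES[kind];
}

// Rule 1 of claude.md says a plan always precedes code, so a new
// feature is split into ordered steps with the plan first.
function stepsForFeature(): Array<{ agent: Agent; kind: TaskKind }> {
  const kinds: TaskKind[] = ["execution-plan", "code-generation", "tests"];
  return kinds.map((kind) => ({ agent: agentFor(kind), kind }));
}
```

Because `RESPONSIBILITIES` is typed as a full `Record`, the compiler rejects a task kind that has no owner, which keeps the two persona files and the routing in sync.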

2.4 Multi-Round Reinforcement: How AI Learns Your Codebase

To reach 75% AI-generated code, you must institutionalize iterative correction.

Correction Prompt Example

Codex, revise the handler:

- Add RBAC

- Throw ReferralNotFoundError

- Add telemetry logging

Corrected Output

    if (!user.roles.includes('creator')) throw new AccessDeniedError();
    ...
    Telemetry.track('referral_stats_accessed', { creatorId: query.creatorId });

This loop is the engine of AI-native engineering.
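To show how the three corrections might fit together, here is one plausible arrangement as a standalone function. It is not the elided middle of the snippet above, which remains unknown. Only the error names, the role check, and the telemetry event name come from the original; the `User` and `StatsQuery` types, the in-memory `statsByCreator` lookup, and the `Telemetry` stub are assumptions for illustration.

```typescript
class AccessDeniedError extends Error {}
class ReferralNotFoundError extends Error {}

// Assumed shapes, not defined in the original.
interface User { id: string; roles: string[] }
interface ReferralStats { totalReferrals: number }
interface StatsQuery { creatorId: string }

// Hypothetical telemetry stub that records events in memory.
const tracked: Array<{ event: string; props: Record<string, string> }> = [];
const Telemetry = {
  track(event: string, props: Record<string, string>): void {
    tracked.push({ event, props });
  },
};

// Hypothetical data source standing in for a repository.
const statsByCreator: Record<string, ReferralStats> = {
  c1: { totalReferrals: 3 },
};

async function getReferralStats(user: User, query: StatsQuery): Promise<ReferralStats> {
  // Correction 1: RBAC. Only creators may read referral stats.
  if (!user.roles.includes("creator")) throw new AccessDeniedError();

  // Correction 2: a missing record is a domain error, not an empty result.
  const stats = statsByCreator[query.creatorId];
  if (stats === undefined) throw new ReferralNotFoundError();

  // Correction 3: log telemetry only after a successful lookup.
  Telemetry.track("referral_stats_accessed", { creatorId: query.creatorId });
  return stats;
}
```

The ordering is a design choice: checking access before touching data avoids leaking whether a record exists, and tracking last means failed requests do not inflate the access metric.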

Section 3: CEO Only – The Non-Delegable Items

Only the CEO can:

- Set the AI-coding transformation mandate

- Provide air cover during the temporary productivity dip

- Require execution plans

- Enforce multi-agent workflows

- Require AI-ready codebase structure

- Fund documentation + MCP pipelines

- Drive the cultural shift from “AI as helper” → “AI as teammate”

AI adoption is not a tooling project. It is a leadership project.

The End State – What 75% AI-Coded Engineering Looks Like

A truly AI-native engineering organization can achieve:

- Up to 70–90% of new code drafted by AI

- Auto-generated tests + explicit documentation

- Architecture that stays clean through continuous refactoring

- Monthly modernization cycles instead of annual rewrites

- Release cycles shortened from months to weeks

- Teams reduced to 2–3 engineers with a product architect

- Design-to-production UI fidelity close to 100%

- 3–10× developer velocity

This is the real, operational blueprint for true AI-native engineering transformation.
